Amazon S3 Files: Application Modernization Made Easy with Native NFS Access to S3

“You mustn’t be afraid to dream a little bigger, darling.” — Tom Hardy (Eames), Inception

I remember the first time I saw S3 and heard the explanation of what object storage is. It seemed strange to me, and I thought: “I like the concept and the technology, but how can I integrate it with all the applications already out there?” Googling to satisfy my curiosity, I found out I wasn’t alone. Since then, object storage adoption has grown, and people have kept trying to solve the same problem: how can we make applications that only understand files work with data stored as objects in buckets?

Plenty of community projects try to solve this old problem: rclone, s3fs-fuse, s3backer, goofys, you name it. Unfortunately, none of them offers a proper production-grade POSIX file system backed by S3.

s3fs-fuse runs in userspace and is slow; s3backer has a good caching mechanism, but consistency is an issue, and data can be corrupted if something goes wrong; rclone is great for syncing and has a great caching mechanism, but it has no way to lock files across multiple hosts.

But beyond the “how do I mount S3 as a drive” problem, there’s a bigger question that keeps coming up in our projects: how do you modernize a legacy application that speaks files, when the data should live in S3?

The Real Problem: Modernizing Applications That Speak Files

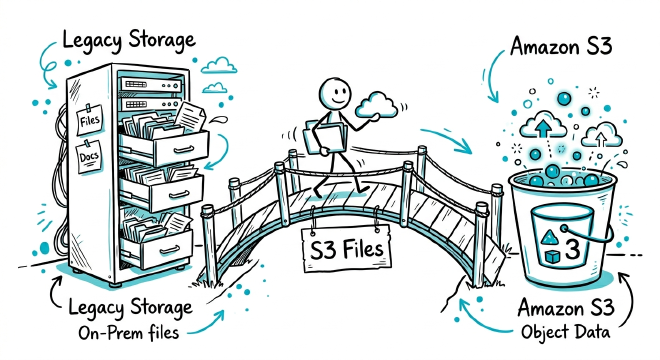

If you have a legacy application (maybe on-premises) that uses files and needs to be refactored, you know the pain, because the traditional modernization path looks like this:

- Migrate all data to S3

- Rewrite the application to use the S3 API

- Maintain a custom two-way sync mechanism during the transition

- Cut over

Step 1 takes a lot of time, and steps 2 and 3 are where projects usually get stuck. You can’t just flip a switch, because you need the old application to keep reading and writing files while the new components start using the S3 API. That means keeping data duplicated in two places and building a sync mechanism, while praying that nothing goes out of alignment. I’ve seen many projects get stuck or significantly delayed because the data migration was tangled with application refactoring.

What if you could migrate all the data once and refactor the application at your own pace, with both old and new components accessing the same data through different interfaces?

On the 31st of March 2026, AWS announced Amazon S3 Files, and that’s exactly what it enables.

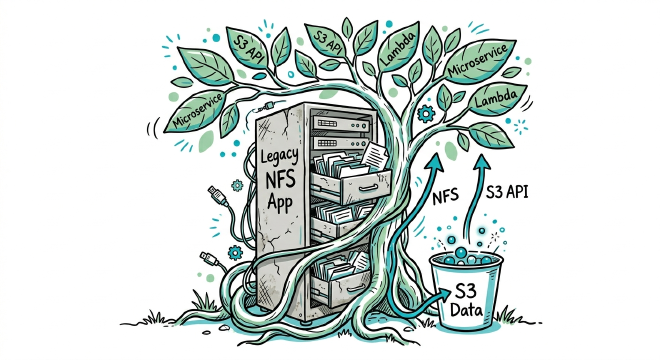

Strangler Fig for Storage

We have already seen the strangler fig pattern applied to applications, with old and new services sitting behind a custom load balancing and authentication layer. Now there is another option for modernizing applications: I call this approach the strangler fig for storage.

With S3 Files, the modernization path becomes:

- Migrate your data to S3 (a well-understood process)

- Mount the S3 bucket as a file system using S3 Files

- Point your legacy application to the new NFS mount

- The application keeps working, no code changes needed

- Refactor your application incrementally, service by service, to use the S3 API directly

- As each component moves to native S3 access, the file system handles less traffic

- Eventually, decommission the file system mount

This is the strangler fig pattern applied to storage rather than services. Your data lives in one place (S3), and you provide two access paths: NFS for legacy components and the S3 API for modernized components. No data duplication, no sync nightmares, no big-bang migration.
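To make the two access paths concrete, here is a minimal Python sketch of how application code could hide them behind one interface. Everything in it is my own illustration: `BlobStore`, `FileBlobStore`, `S3BlobStore`, and `archive_report` are hypothetical names, the mount root is whatever path you mounted the file system on, and the S3 side assumes a standard boto3 S3 client is passed in.

```python
from pathlib import Path
from typing import Any, Protocol


class BlobStore(Protocol):
    """The storage interface the application code depends on."""

    def read(self, key: str) -> bytes: ...

    def write(self, key: str, data: bytes) -> None: ...


class FileBlobStore:
    """Legacy path: plain file I/O against the NFS mount."""

    def __init__(self, mount_root: str) -> None:
        self.root = Path(mount_root)

    def read(self, key: str) -> bytes:
        return (self.root / key).read_bytes()

    def write(self, key: str, data: bytes) -> None:
        path = self.root / key
        path.parent.mkdir(parents=True, exist_ok=True)
        path.write_bytes(data)


class S3BlobStore:
    """Modernized path: the same keys, reached through the S3 API."""

    def __init__(self, client: Any, bucket: str) -> None:
        # `client` is assumed to be a boto3 S3 client, e.g. boto3.client("s3").
        self.client = client
        self.bucket = bucket

    def read(self, key: str) -> bytes:
        response = self.client.get_object(Bucket=self.bucket, Key=key)
        return response["Body"].read()

    def write(self, key: str, data: bytes) -> None:
        self.client.put_object(Bucket=self.bucket, Key=key, Body=data)


def archive_report(store: BlobStore, name: str, body: bytes) -> None:
    """Application code: unaware of which access path it is using."""
    store.write(f"reports/{name}", body)
```

With something like this in place, moving a component from NFS to the S3 API becomes a one-line change at the point where the store is constructed, which is what lets you refactor service by service.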

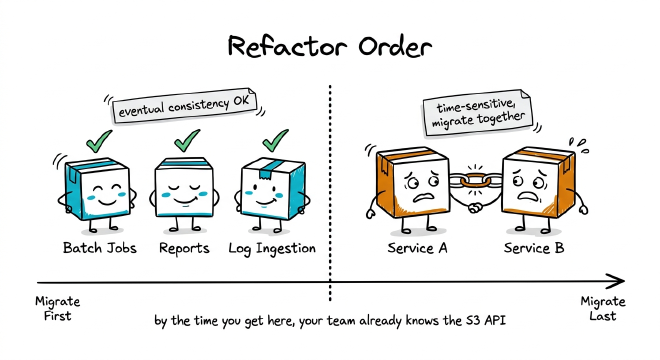

Planning the Refactor: Start with What Can Wait

The bi-directional sync helps with the refactor, but your plan has to account for the fact that writes are batched, not instant: when a component writes a file via NFS, it can take minutes before the change is visible in S3.
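From the point of view of a component that already uses the S3 API, that delay looks like "the object is not there yet". As a stopgap, such a reader can wait for the object to appear. The sketch below is an assumption-laden illustration, not an S3 Files feature: `wait_until_visible` is a hypothetical helper, the timeout and polling interval are arbitrary, and it only uses the standard `list_objects_v2` call on a boto3 S3 client that you pass in.

```python
import time
from typing import Any, Callable


def wait_until_visible(
    client: Any,
    bucket: str,
    key: str,
    timeout_s: float = 600.0,
    poll_s: float = 15.0,
    clock: Callable[[], float] = time.monotonic,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Return True once `key` is listed in `bucket`, False after `timeout_s`."""
    deadline = clock() + timeout_s
    while True:
        # Listing is by prefix and sorted by key, so if the exact key exists
        # it is the first result; compare it exactly to rule out longer keys.
        page = client.list_objects_v2(Bucket=bucket, Prefix=key, MaxKeys=1)
        if any(obj["Key"] == key for obj in page.get("Contents", [])):
            return True
        if clock() >= deadline:
            return False
        sleep(poll_s)
```

Note that this only detects new files. An update to an existing file would need a comparison on version or modification time instead, and in both cases reacting to a notification is cleaner than polling.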

This means you can’t just refactor components in random order because you need to think about which parts of your application are time-sensitive and which ones aren’t.

You can start by migrating the components that tolerate eventual consistency, like batch processing, report generation, and log ingestion: basically anything that operates on files written minutes or hours earlier. These components can switch to the S3 API at any time and in any order, because by the time they process a file the sync has already happened. If you are unlucky, the data will simply be picked up on the next run.

With this approach, you can leave the time-sensitive functions for later and refactor them together. Let’s say that service A writes a file and service B needs to read it immediately: you have to migrate both A and B to the S3 API at the same time, otherwise the sync delay might bite you and your users. Migrating two or more components at once takes more effort, but by then the development team has already got its hands dirty with S3 and knows what to do. The time-sensitive components are also likely to be fewer than the rest, so it makes sense to leave them for the end of the process.

In practice, you have to map your data flows before you start. Identify which components write, which ones read, and how much latency each can tolerate. The components with the loosest timing requirements can be refactored first, which also helps the team get familiar with the new APIs; the tightly coupled ones go last.
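Here is a rough Python sketch of that mapping exercise. It is only a planning aid under my own assumptions: `Flow` and `plan_waves` are hypothetical names, the expected sync delay is a number you estimate yourself, and I assume the delay applies in both directions. Components joined by a flow that cannot tolerate the delay are grouped so they move together, and groups are ordered from loosest to tightest timing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Flow:
    writer: str
    reader: str
    max_delay_s: float  # how stale the reader can tolerate the file being


def plan_waves(flows: list[Flow], sync_delay_s: float) -> list[list[str]]:
    """Group components into migration waves, loosest timing first."""
    parent: dict[str, str] = {}

    def find(name: str) -> str:
        # Union-find lookup with path halving.
        parent.setdefault(name, name)
        while parent[name] != name:
            parent[name] = parent[parent[name]]
            name = parent[name]
        return name

    tightest: dict[str, float] = {}
    for flow in flows:
        for name in (flow.writer, flow.reader):
            find(name)
            tightest[name] = min(tightest.get(name, float("inf")), flow.max_delay_s)
        if flow.max_delay_s < sync_delay_s:
            # The reader cannot wait for a sync: both ends must move together.
            parent[find(flow.writer)] = find(flow.reader)

    groups: dict[str, list[str]] = {}
    for name in list(parent):
        groups.setdefault(find(name), []).append(name)

    # Loosest groups first; ties are broken alphabetically for stable output.
    return sorted(
        (sorted(members) for members in groups.values()),
        key=lambda members: (-min(tightest[m] for m in members), members),
    )


flows = [
    Flow("uploader", "thumbnailer", max_delay_s=1),
    Flow("uploader", "reporter", max_delay_s=86_400),
    Flow("ingest", "reporter", max_delay_s=3_600),
]
waves = plan_waves(flows, sync_delay_s=300)
```

In this made-up example, the ingest and reporter components can go first and independently, while the uploader and the thumbnailer end up in the same final wave because the thumbnailer needs the file within a second.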

One more thing: the EventBridge notifications triggered on every sync can help you build a coordination layer. When a file is synced to S3, a notification fires, and a modernized consumer can react to it instead of polling. In practice, you’re moving from “read the file when you need it” to “process the file when it’s ready,” which is a better pattern anyway.
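A consumer along those lines could look like the sketch below. Big caveat: I am assuming the notification has the same shape as the standard S3 "Object Created" event delivered through EventBridge (`detail.bucket.name` and `detail.object.key`); check the actual event payload before relying on it. `make_handler` and `process` are hypothetical names of mine.

```python
from typing import Any, Callable, Optional


def make_handler(
    process: Callable[[str, str], None],
    prefix: str = "",
) -> Callable[[dict[str, Any], Optional[Any]], bool]:
    """Build a Lambda-style handler that calls `process(bucket, key)`."""

    def handler(event: dict[str, Any], context: Optional[Any] = None) -> bool:
        # Assumed event shape: the standard S3 "Object Created" event.
        if event.get("detail-type") != "Object Created":
            return False
        detail = event.get("detail", {})
        bucket = detail.get("bucket", {}).get("name")
        key = detail.get("object", {}).get("key")
        if not bucket or not key or not key.startswith(prefix):
            return False
        process(bucket, key)
        return True

    return handler
```

The handler returns whether it processed the event, which keeps it easy to test locally with a hand-written dictionary before wiring it to a real rule.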

Now that we’ve seen why S3 Files matters for modernization, let’s look at what it actually is and how it works under the hood.

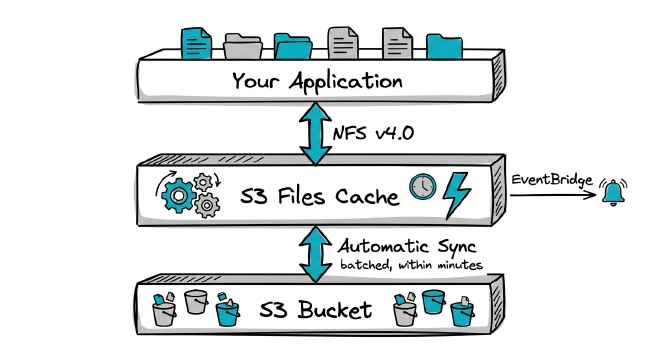

What is Amazon S3 Files?

S3 Files is a new AWS resource that provides a fully managed, high-performance shared file system, giving you native NFS (v4.0+) access to your S3 data. It does not implement a filesystem layer on the client side: it provides a real NFS file system backed by S3 data as a new resource type. You provision it from the S3 console or AWS CLI.

You can create a file system from any S3 bucket or prefix, so no data migration is required: files and directories reflect what’s in the S3 bucket, changes are visible to file applications, and everything syncs bi-directionally between the bucket and the file system.

Because it’s based on S3, you can count on the “usual” 11 9s of durability, and in addition, it’s multi-AZ, serverless, elastic, and it supports POSIX, Access Points, and a CSI driver for Kubernetes. I know, it seems too good to be true!

I have to say that I am not a big fan of NFS because of its locking mechanisms and its implementation (good luck if you happen to lose the connection to your remote filesystem), but it’s better than nothing. My dream is that sometime in the future we will have a simple, distributed, and fast file system with proper locking that doesn’t eat IOPS just for metadata access, but for now, let’s keep things simple.

S3 Files Performance: Millisecond Reads, Terabits of Throughput

By the specs, its performance is really good: we’re talking about ~1 ms for file reads, as low as 2.7 ms for writes, and aggregate read throughput that can reach multiple terabits per second. Per-client read throughput reaches 3 GiB/s. You cannot build a relational database on it, but hey, there’s Aurora and RDS for that!

Also, note that these aren’t typical EFS-level numbers, and that applies to metadata too. Metadata is pre-warmed to reduce latency, so operations like cd into a directory and running ls on thousands of files don’t take minutes. If you’ve ever waited for an ls on a large NFS mount, you know what I’m talking about (aaah, the constant feeling of having to restart your SSH session because of an ls, with the fear of a system freeze. Good old, but still current, times!)

How S3 Files Synchronization Works

S3 Files doesn’t just dump everything into the file system and call it a day. There’s an intelligent synchronization layer with smart defaults.

It sits in the middle between the file system and S3 (and the volume of data stored in this layer determines the service cost). When you add an S3 Files resource, you get this “cache” managed by AWS; it’s like having a fully managed, highly available, and elastic storage gateway that you don’t have to upgrade or configure.

By default, files and directories reflect the contents of your S3 bucket. Metadata syncs immediately for fast file operations. Small files (under 128 KB) are loaded when the directory is accessed, and reads for larger files are optimized for high throughput. The system automatically offloads data based on the number of days since the last file access, with a default of 30 days (which helps lower your AWS bill).

Not everything is perfect: some aspects aren’t suitable for every use case. Writes are batched and reach S3 within minutes, so consistency is still an issue. Luckily, versioning must be enabled on the bucket: when files sync back to S3, a new version of the object is created, so if there are conflicts, you can fix them (but be careful: you don’t want to manually fix production data or, worse, have inconsistencies). When a new version of an object is written to the system, S3 Files triggers an EventBridge notification, so you can also build event-driven pipelines even when the workload is file-based!
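Because every sync produces a new object version, the version history is where you would look for possible conflicts. The following sketch is only an illustration of that idea: `versions_by_key` and `recently_rewritten` are hypothetical helpers, the time window is an arbitrary heuristic, and the only real API used is `list_object_versions` on a boto3 S3 client that you pass in.

```python
from collections import defaultdict
from datetime import timedelta
from typing import Any


def versions_by_key(client: Any, bucket: str, prefix: str = "") -> dict[str, list[dict]]:
    """Collect every object version under `prefix`, grouped by key."""
    history: dict[str, list[dict]] = defaultdict(list)
    kwargs: dict[str, Any] = {"Bucket": bucket, "Prefix": prefix}
    while True:
        page = client.list_object_versions(**kwargs)
        for version in page.get("Versions", []):
            history[version["Key"]].append(version)
        if not page.get("IsTruncated"):
            return dict(history)
        # Continue from where the previous page stopped.
        kwargs["KeyMarker"] = page["NextKeyMarker"]
        if "NextVersionIdMarker" in page:
            kwargs["VersionIdMarker"] = page["NextVersionIdMarker"]


def recently_rewritten(history: dict[str, list[dict]], window: timedelta) -> list[str]:
    """Keys whose two newest versions were written within `window` of each other."""
    flagged = []
    for key, versions in history.items():
        stamps = sorted((v["LastModified"] for v in versions), reverse=True)
        if len(stamps) >= 2 and stamps[0] - stamps[1] <= window:
            flagged.append(key)
    return sorted(flagged)
```

Two versions written close together are not proof of a conflict, just a hint that both access paths may have touched the same file, so treat the output as a review list rather than something to fix automatically.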

Access Patterns

There are two modes for importing data into the file system:

- On directory read: brings in data on the first directory listing. This is a pre-load approach, good when you know you’ll need the files in a given path.

- On file read: brings in data only when a specific file is actually read. Lazy loading, basically.

Fine-Grained Sync Control

Data loading and sync are customizable: there are smart defaults, but you can change the parameters depending on your workload. You can define how long to keep data in the file system (1 to 365 days), when to import based on your access patterns (on directory read or on file read), and the object size threshold to import based on your latency needs. There’s also an on-demand import option you can trigger from the CLI to hydrate specific data into the file system.

| Sync Type | Days to Store | Import When | File Size Threshold |
|---|---|---|---|
| Default Sync | 30 | On directory read | 128 KB |
| Custom Sync | 1 to 365 | On directory read OR on file read | Configurable |
| On Demand Import | 1 to 365 | At CLI run time | Configurable |
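To reason about these knobs for a given workload, I find it useful to write them down as a small model. The Python sketch below is not the S3 Files API: `SyncPolicy`, `ImportTrigger`, and the method names are hypothetical, and the exact boundary behavior (for example, what happens at precisely 30 days) is my assumption. It only encodes the defaults and ranges from the table above.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from enum import Enum


class ImportTrigger(Enum):
    ON_DIRECTORY_READ = "on_directory_read"
    ON_FILE_READ = "on_file_read"


@dataclass(frozen=True)
class SyncPolicy:
    """A local model of the sync settings; defaults mirror the Default Sync row."""

    retention_days: int = 30
    import_trigger: ImportTrigger = ImportTrigger.ON_DIRECTORY_READ
    size_threshold_bytes: int = 128 * 1024

    def __post_init__(self) -> None:
        if not 1 <= self.retention_days <= 365:
            raise ValueError("retention_days must be between 1 and 365")
        if self.size_threshold_bytes < 0:
            raise ValueError("size_threshold_bytes must not be negative")

    def imports_on_listing(self, size_bytes: int) -> bool:
        """True if listing the directory would already bring this file's data in."""
        return (
            self.import_trigger is ImportTrigger.ON_DIRECTORY_READ
            and size_bytes < self.size_threshold_bytes
        )

    def is_offloaded(self, last_access: datetime, now: datetime) -> bool:
        """True if the file has gone unread for longer than the retention period."""
        return now - last_access > timedelta(days=self.retention_days)
```

Running your own file size distribution and access timestamps through a model like this gives a first estimate of how much data would sit in the file system layer, which is the part you pay for.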

S3 Files vs. s3fs-fuse, Storage Gateway, and Mountpoint for Amazon S3

We’ve seen s3fs-fuse, rclone, and s3backer as community implementations, but even in the AWS ecosystem there were already options for accessing S3 data through a file interface, and none of them was ideal for every scenario.

Mountpoint for Amazon S3 translates local file system calls into S3 API calls. It is open source and maintained by AWS, but its usage is limited to streaming workloads, such as analyzing huge datasets for machine-learning tasks: it cannot modify existing files or delete directories, for example. It’s intended only for a subset of specific workloads.

AWS Storage Gateway (File Gateway) provides cached file access to S3 data, serving it through NFS or SMB. However, it requires an additional appliance (hosted on EC2 instances or on-premises virtual machines) and does not offer high availability, since there’s no clustering solution. It also has a limitation on Windows file permissions: they are saved in object metadata attributes, so you cannot have more than 9 access control list entries for Windows.

How to Mount S3 as a Filesystem with S3 Files

To set up S3 Files you need to:

- Create the file system from your S3 bucket (via console or CLI)

- Create a mount target in your VPC subnet (like you would normally do for EFS)

- Install the efs-utils package or the NFS userspace tools on your instance

- Mount the file system
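Once the mount is in place, it is worth checking it before pointing the legacy application at it, because writing to an unmounted directory silently lands the data on the local disk. The sketch below is a generic check that works for any mount, not something specific to S3 Files; `check_mount` is a hypothetical helper and the mount path is whatever you chose.

```python
import os
import uuid
from pathlib import Path


def check_mount(path: str, probe_write: bool = True) -> list[str]:
    """Return the problems found; an empty list means the path looks usable."""
    if not os.path.isdir(path):
        return [f"{path} is not a directory"]
    problems = []
    if not os.path.ismount(path):
        problems.append(f"{path} is not a mount point; writes would stay on the local disk")
    if probe_write:
        # Write, read back, and remove a uniquely named probe file.
        probe = Path(path) / f".probe-{uuid.uuid4().hex}"
        try:
            probe.write_bytes(b"ok")
            if probe.read_bytes() != b"ok":
                problems.append(f"read-back mismatch on {probe}")
        except OSError as exc:
            problems.append(f"cannot write to {path}: {exc}")
        finally:
            probe.unlink(missing_ok=True)
    return problems
```

Pass `probe_write=False` if you would rather not create a transient file on a file system that syncs back to a versioned bucket.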

There’s a new set of IAM permissions for S3 Files, so you’ll need to update your policies accordingly. CloudFormation support is available at launch.

S3 Files Pricing

S3 Files introduces its own pricing components in addition to the existing S3 pricing: it charges for read and write operations and for the capacity used by hot data stored on the file system. You can read pricing details and scenarios in the “files” section of the pricing page.

Large file reads served directly from S3 bypass these charges entirely (you still pay for the S3 API requests). So as you modernize components to use the S3 API directly, you naturally reduce your S3 Files costs. The pricing model aligns with the modernization journey.

Final Thoughts

For years, the “file vs. object” choice felt like an architectural fork in the road: pick one and deal with the consequences. Now you don’t have to.

The modernization angle is what got me. S3 Files lets you untangle the data migration from the application refactoring. Move your data once, refactor at your own pace.

If you’re dealing with a legacy application that understands only files, and you’ve been dreading the S3 migration, this is worth considering.

Have you been facing the file-vs-object dilemma? What legacy app is keeping you tied to on-prem storage right now? Drop a comment below, I’d love to hear about your use case.