How a Billing Bug Made Me Owe $58,000 to AWS (For a Week)

This is the story of how a bug in Amazon Bedrock’s OpenAI-compatible API layer made my bill skyrocket to $58,000. But it’s also the story of how understanding what you’re doing can turn a terrifying situation into a positive outcome for everyone. It happened a week before the Gemini billing issues reported by Truffle Security, which left developers with bills even higher than mine. As you’ll see, my story ends differently.

I was running some tests with AI models on Amazon Bedrock, spending $3 to $5 a day, feeling responsible, monitoring costs daily, and setting up budget alarms like a good cloud citizen. Then one day, I received two budget notifications in my mailbox. Not for $100. Not for $500, but for $56,265.59. And I also received a forecast alarm for $70,161.62.

Here’s how it went.

The setup: testing autonomous agents on Bedrock

I’m an AWS Community Builder. Other than meeting people and speaking at events, I test things (mainly AWS and cloud-related) to share what I learn. In February 2026, I started experimenting with autonomous agents. The plan was simple: test a few models on Amazon Bedrock with OpenClaw, compare their behaviors, identify the gotchas, learn how to avoid mistakes and problems, and write about it.

I started my OpenClaw journey with DeepSeek v3.2 in the eu-north-1 region. Everyone else uses Claude, but I wanted to experiment with something different, and I wanted to use the native Bedrock Converse APIs. I also gave OpenCode a spin for a while to test autonomous coding with Amazon Nova Lite. Everything was working fine, costs were predictable, and life was good.

Then Kimi K2.5 by Moonshot AI landed in the Bedrock model catalog. A new model with good benchmarks, only $0.60 per million input tokens and $3.00 per million output tokens. I had to try it.

I started testing Kimi K2.5 with the native Bedrock APIs on February 16th. But I quickly ran into issues: the OpenClaw Node.js library was behaving oddly with Kimi’s tool usage implementation. Calls were failing, and responses were inconsistent; sometimes, no action was taken after a request. Not a great start.

So I did what any curious nerd would do: I read the documentation (shh, don’t tell anyone, it’s a secret: we’re not wizards, we’re just good at reading things). That’s where I discovered Project Mantle.

For those unfamiliar, Project Mantle is Amazon Bedrock’s distributed inference engine that provides OpenAI-compatible API endpoints.

It was announced at re:Invent 2025, and the idea is simple: change the endpoint in your existing code to https://bedrock-mantle.<region>.api.aws/v1, generate a Bedrock access token, and everything will work in the same way, with the only difference being the use of Bedrock for inference.
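To make the "just change the endpoint" idea concrete, here is a minimal sketch that only builds the HTTP request and does not send it. It assumes the endpoint exposes the usual OpenAI-style `/chat/completions` path and accepts the Bedrock access token as a bearer token; the helper names, the token, and the model ID are placeholders for illustration, not values from the AWS documentation.

```python
import json
import urllib.request


def mantle_base_url(region: str) -> str:
    """Build the OpenAI-compatible base URL for a given AWS region."""
    return f"https://bedrock-mantle.{region}.api.aws/v1"


def build_chat_request(region: str, token: str, model_id: str, prompt: str) -> urllib.request.Request:
    """Prepare (but do not send) an OpenAI-style chat completion request."""
    body = {
        "model": model_id,  # placeholder: use the model ID from the Bedrock catalog
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{mantle_base_url(region)}/chat/completions",  # assumed OpenAI-style path
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",  # assumed bearer-token authentication
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_chat_request("us-east-1", "<bedrock-access-token>", "<model-id>", "Hello")
print(request.get_method(), request.full_url)
```

Sending it would be a single `urllib.request.urlopen(request)` call; any OpenAI-compatible client library pointed at the same base URL should behave the same way.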

On February 17th, I switched to the Mantle endpoint in us-east-1 and continued my tests. Same agent, similar prompts, same usage pattern. Just a different API layer. I didn’t experience any of the issues I had before: tools were invoked, and the conversation was consistent. The only anomalies I noticed may have been due to the model’s behavior itself.

I was doing everything right with cloud costs. Or so I thought.

I was logging into my organization’s main account daily to check billing, and I had a budget alarm set at $100 to warn me on the AWS account I was using to run OpenClaw experiments, where I generated the Bedrock access token. I also added an additional forecast alarm.

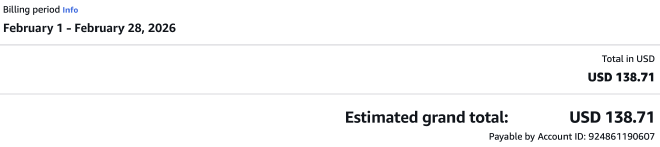

Costs were showing $3 to $5 a day, consistent with what I’d seen with DeepSeek. On February 23rd, the billing for all my organization (including other accounts and services) showed about $140 for the month. Nothing alarming, and managed by my existing AWS Credits.

Then came Tuesday, February 24th. Right after lunch, I received two AWS Budget Notifications simultaneously. The actual cost notification said I had exceeded my $100 budget, and the actual amount was $56,265.59. The forecast notification was even more cheerful: $70,161.62 projected for the month.

My first reaction was the totally rational response of almost having a heart attack. My second reaction was to start investigating, because I wanted to know what went wrong and what I didn’t know.

Finding the issue

When I looked at the billing breakdown for us-east-1, I could not believe my eyes. The Kimi K2.5 Mantle input tokens alone showed 77,228,206 1K tokens at $0.0006 per 1K, totaling $46,336.92.

The output tokens totaled 195,679 1K tokens at $0.003 each, for $587.04. These figures exclude taxes, which is how the total reached about $58,000!
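The arithmetic behind those two line items is easy to reproduce. The sketch below uses the unit counts and per-1K prices quoted above; the function name is made up for illustration, and `Decimal` is used only to avoid floating-point rounding surprises with money.

```python
from decimal import Decimal, ROUND_HALF_UP


def line_item_cost(units_1k: Decimal, price_per_1k: Decimal) -> Decimal:
    """Cost of a billing line: number of 1K-token units times the price per unit."""
    return (units_1k * price_per_1k).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


# Figures as they appeared in the billing breakdown (before taxes).
input_cost = line_item_cost(Decimal("77228206"), Decimal("0.0006"))
output_cost = line_item_cost(Decimal("195679"), Decimal("0.003"))

print(f"input:  ${input_cost}")
print(f"output: ${output_cost}")
print(f"total:  ${input_cost + output_cost}")
```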

I immediately checked the pricing to see whether there was something “hidden” in the documentation, whether I had hit a bug in OpenClaw, or whether I had failed to account for reasoning tokens. The model usage price is the same whether you are using native Bedrock APIs or Project Mantle.

Remembering that OpenClaw tracks token usage on its side, I started my analysis.

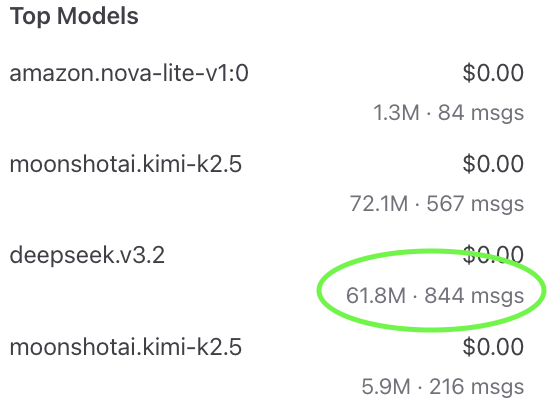

I saw that, according to its metrics, the Kimi K2.5 usage through Mantle was about 72.1 million tokens across 547 messages. That’s 72.1 million tokens, not 72.1 million thousand tokens.

I cross-checked with other models’ usage: DeepSeek v3.2 on native Bedrock APIs showed 61,910.027 1K tokens in the billing console. OpenClaw reported 61.8 million tokens across 844 messages, and that matched: 61.8 million tokens divided by 1,000 equals roughly 61,800 1K tokens.

Amazon Nova Lite usage also matched the billing console and OpenClaw’s metrics, but for Kimi K2.5 through Mantle, the billing console was reporting 77,228,206 1K tokens. If my actual usage was about 77 million tokens, it should have been 77,228 1K tokens. It seemed to me that something treated raw token counts as 1K counts, inflating everything by a factor of 1,000.
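This kind of cross-check can be turned into a small reconciliation script. The sketch below takes the billed 1K-token units and the application-side token counts quoted above and reports how many powers of ten separate them; the function name and the "gap of zero means ok" rule are illustrative choices, not anything AWS or OpenClaw provides.

```python
import math


def magnitude_gap(billed_1k_units: float, app_tokens: float) -> int:
    """Power of ten separating billed tokens from the tokens the application counted."""
    billed_tokens = billed_1k_units * 1000
    return round(math.log10(billed_tokens / app_tokens))


# (billed 1K-token units from the console, tokens tracked by the application)
usage = {
    "DeepSeek v3.2 (native API)": (61_910.027, 61_800_000),
    "Kimi K2.5 (Mantle)": (77_228_206, 72_100_000),
}

for name, (billed, tracked) in usage.items():
    gap = magnitude_gap(billed, tracked)
    status = "ok" if gap == 0 else f"off by a factor of about 10^{gap}"
    print(f"{name}: {status}")
```

Rounding the base-10 logarithm tolerates small differences between the two sources (a few percent either way) while still flagging a unit mix-up such as tokens being recorded as thousands of tokens.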

So, instead of $46.34, I was being billed $46,336; a comma in the wrong place, and suddenly I owe more than a new car. Luckily, my AWS bill total still didn’t resemble a phone number, as Corey Quinn says.

What made this worse was the complete absence of early warning signals. CloudWatch metrics for model invocations showed activity, but the token usage metrics through the Mantle endpoint weren’t populating at all. As of when I filed my report, the CloudWatch dashboard still showed no token usage data for the Mantle API calls.

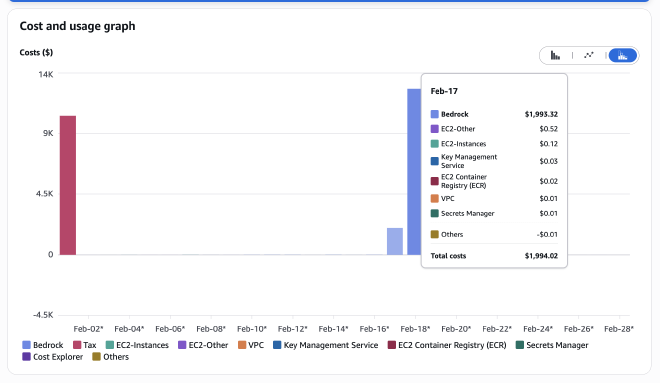

The billing data for Mantle usage also appeared to be delayed and then dumped all at once, bypassing the alarm threshold notifications that would have made me stop testing days earlier. That explains the sudden budget notification even with a $100 threshold.

Cost Explorer, after a week, showed that the anomaly started on February 17th, the exact day I switched to Mantle. But I didn’t receive a notification until the 24th. A full week of silence.

Acting in good faith

Being an AWS Community Builder means you care about things beyond your AWS account: you want things to work better for everyone, and you do this with other kind human beings who invest their free time to care for others.

When I spotted that 1,000x discrepancy, I didn’t just panic and open a support ticket for a billing complaint: I started analyzing things to see what went wrong. I know from experience that acting in good faith pays off, not only for you, but also for the people who’ll run into the same issue.

Since I had all the data, I built a report showing the pattern: native Bedrock APIs reported tokens correctly; Mantle did not; the numbers were clear; and the 1,000x factor was consistent.

I filed a detailed support case with AWS, walking through every comparison point.

It took some time due to a spike in requests (I am on the basic support plan), but they took it seriously, and the chat I had with Uzma, the support representative who managed my case, made me feel heard. I shared with him my concerns and he reassured me that he would pass all the information to the team for a deeper analysis.

After that chat (which happened on Saturday), the billing was corrected on Tuesday, and the bug was addressed. My billing was back to normal, and it felt both magical and relieving.

The service team fixed the bug, and now it will be fixed for everyone.

As a Community Builder, I had people I could reach out to, both to check what my options were and to find out whether I could help explain things. I contacted Corey Strausman, the CB community manager, on the Community Builders Slack workspace, and even though he was absolutely swamped reviewing new CB applications and keeping the community running, he took the time to reassure me that he’d help get the issue in front of the Mantle team if needed.

That kind of human response, someone telling you “I’ve got your back if this doesn’t get resolved,” makes the difference between staying calm and feeling helpless or going mad when you’re staring at a five-figure bill.

In the end, AWS Support handled it on their own, but knowing Corey was ready to step in gave me peace of mind during a pretty stressful week.

This is what acting in good faith looks like in practice: I wasn’t trying to abuse the system or get out of legitimate charges, and I had the data to prove it was a token accounting error that produced impossible numbers. AWS recognized this and acted on it, even before I got a warning about a failed transaction on my monthly bill (my credit card has a far lower transaction and total credit limit).

I want to be clear: I think Project Mantle is a solid addition to Amazon Bedrock. The ability to use OpenAI-compatible APIs with Bedrock models is genuinely useful, especially for migration scenarios. It also features a Zero Operator Access design, indicating that AWS is taking security seriously at the infrastructure level.

This bug was an accounting issue, not a fundamental flaw. It got caught, it got fixed, and hopefully the monitoring gaps are being addressed, too. That’s how software gets better.

If you’re experimenting with AI models on any cloud platform, here is my advice (it worked for me, you should try it, trust me :) ):

Monitor from your application, not just the console. If your application has a bug, its own metrics will show it. If you are using too many tokens, you can compare them with the console and trace the issue. And if everything seems fine but you hit a billing bug, you have the figures to document it. The billing console and CloudWatch should be your safety net, not your only source of truth.
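A minimal sketch of application-side tracking, assuming each response carries an OpenAI-style `usage` object with `prompt_tokens` and `completion_tokens` fields. The `TokenLedger` class and the model label are hypothetical; OpenClaw has its own usage tracking, and this is not how it is implemented.

```python
from collections import defaultdict


class TokenLedger:
    """Accumulates per-model token usage as reported in each API response."""

    def __init__(self) -> None:
        self.totals = defaultdict(lambda: {"requests": 0, "input": 0, "output": 0})

    def record(self, model: str, usage: dict) -> None:
        """Add one response's usage; missing fields count as zero."""
        entry = self.totals[model]
        entry["requests"] += 1
        entry["input"] += usage.get("prompt_tokens", 0)
        entry["output"] += usage.get("completion_tokens", 0)

    def expected_1k_units(self, model: str) -> tuple[float, float]:
        """Input and output usage in 1K-token units, the unit the bill is expressed in."""
        entry = self.totals[model]
        return entry["input"] / 1000, entry["output"] / 1000


ledger = TokenLedger()
ledger.record("kimi-k2.5", {"prompt_tokens": 1500, "completion_tokens": 300})
ledger.record("kimi-k2.5", {"prompt_tokens": 1000, "completion_tokens": 200})
print(ledger.expected_1k_units("kimi-k2.5"))
```

Persisting these totals daily gives you an independent figure to put next to the billing console when something looks off.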

Set meaningful budget alarms. My $100 alarm was good. Having intermediate alarms at $20, $50, and $75 would have been better, assuming the billing data had arrived on time.
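One way to get those intermediate alarms is to attach several notifications to a single budget. The sketch below builds the notification list in the shape that boto3's `budgets` client expects for `create_budget`; the budget name, the helper names, and the email address are placeholders, and the `create_budget` wrapper is shown for context only and needs boto3 plus valid credentials to run. With a $100 limit, percentage thresholds of 20, 50, and 75 correspond to $20, $50, and $75.

```python
def build_notifications(email: str, actual_thresholds=(20, 50, 75, 100), forecast_threshold=100) -> list:
    """Build one ACTUAL alert per threshold, plus a single FORECASTED alert."""

    def notification(kind: str, threshold: float) -> dict:
        return {
            "Notification": {
                "NotificationType": kind,
                "ComparisonOperator": "GREATER_THAN",
                "Threshold": float(threshold),
                "ThresholdType": "PERCENTAGE",  # percent of the budget limit
            },
            "Subscribers": [{"SubscriptionType": "EMAIL", "Address": email}],
        }

    items = [notification("ACTUAL", t) for t in actual_thresholds]
    items.append(notification("FORECASTED", forecast_threshold))
    return items


def create_budget(account_id: str, email: str, limit_usd: str = "100") -> None:
    """Create a monthly cost budget with the alerts above (not called in this sketch)."""
    import boto3  # imported lazily so the payload builder works without boto3 installed

    boto3.client("budgets").create_budget(
        AccountId=account_id,
        Budget={
            "BudgetName": "bedrock-experiments",  # placeholder name
            "BudgetLimit": {"Amount": limit_usd, "Unit": "USD"},
            "TimeUnit": "MONTHLY",
            "BudgetType": "COST",
        },
        NotificationsWithSubscribers=build_notifications(email),
    )


alerts = build_notifications("me@example.com")
print([(a["Notification"]["NotificationType"], a["Notification"]["Threshold"]) for a in alerts])
```

None of this helps if the billing data itself arrives late, which is exactly why application-side counts matter as a second source.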

Be careful with what you don’t know. Project Mantle is a great piece of engineering, and I’m going to keep using it. New services may come with new bugs, and early adopters are the ones who find them. That’s not a reason to avoid new things. It’s a reason to be extra vigilant when you try something new or unfamiliar.

Document everything. When you find an issue, a clear, data-driven report makes the difference. Don’t just say “my bill is wrong.” Show the math.

Back to testing!

My billing is back to normal, and I’m back to trying things and writing about them. OpenClaw with Bedrock has been a wild ride so far, and I’ve barely scratched the surface.

I’ve got a dedicated article in the making about my experience with OpenClaw. Stay tuned for that one, because I gave “him” AdministratorAccess to an AWS account in my organization and asked it to automate tasks and implement new things. How it handled those tasks genuinely surprised me.

If you’re an early adopter testing new or unfamiliar services, keep your eyes open and your monitoring tight. If you find a bug, report it with data. And if you’re part of a community, don’t be afraid to ask for help.

Have you ever had a billing scare? I’d love to hear your stories. Drop a comment below, or find me on LinkedIn. And if you’re using Project Mantle, maybe double-check your token counts. Just in case :) (no, I’m kidding, everything is fixed now!)