My self-hosting setup: Tailscale, Caddy, Let's Encrypt, and Route53

I wanted to self-host Immich and Karakeep on my Raspberry Pi at home. Simple enough, right? Well, not quite. At the time my ISP used double NAT, so port forwarding wasn’t even an option (and, as we saw, we’re not ready for IPv6 yet). I also didn’t want to expose anything to the public internet: I just wanted my services accessible to me, from anywhere, with proper HTTPS and meaningful domain names, without having to configure another VPN or maintain a publicly exposed service.

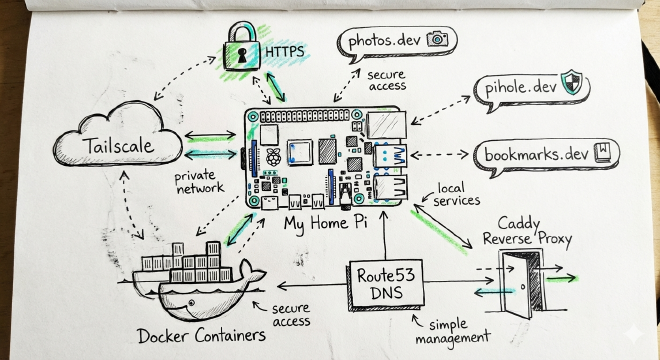

So I built a setup using Tailscale for private networking, Caddy as a reverse proxy, Let’s Encrypt for certificate generation and renewal via Route53 DNS challenges, and a bunch of Docker containers running on a humble Raspberry Pi. It’s been running reliably for two years now.

Why this setup? #

Here’s the problem I was trying to solve: I wanted to host my services without having to memorize ports, strange paths, or naming conventions. For example, photos.example.com should go to my Immich instance, bookmarks.example.com should go to my Karakeep installation, and so on. If I switch from one bookmark app to another, I want to keep the same DNS name. I already have to remember too much stuff in and outside work, so these tools should make my life easier.

I also wanted everything to be:

- Private: Only accessible via Tailscale, not the public internet

- Secure: Proper HTTPS with valid certificates (no annoying browser warnings)

- Simple: Add a new service? Just update the Caddyfile, no Route53 changes needed

- Lazy-friendly: If you read my bio, you’ll see that I’m lazy, and this whole setup is the confirmation. The most important thing: because I’m running services mostly on Docker, I don’t want to remember every port mapped to my applications!

The architecture #

My setup uses these components:

- Tailscale to form a private mesh VPN that connects all my devices. Why don’t I use an open-source solution? It came along after I built my setup, and right now this managed service is fine for me, even if I’m still on the free tier

- Route53 to manage my DNS domain. I could have used Cloudflare, but I already have an AWS account

- Let’s Encrypt for generating free SSL/TLS certificates. Certbot already has a plugin that integrates with Route53

- Caddy as a reverse proxy handling HTTPS termination and routing to services

- Docker to run all the actual applications (Immich, Pi-hole, etc.)

The flow looks like this:

My device (on Tailscale)

↓

photos.lazypenguin.dev (Route53)

↓

Tailscale IP → Caddy (port 443)

↓

Docker container (Immich on port 2283)

What I’m running right now #

Here’s what’s currently behind this setup:

- Immich (photos.lazypenguin.dev): Self-hosted photo management, like Google Photos but actually yours

- Karakeep (bookmarks.lazypenguin.dev): Bookmark manager (might switch to Linkwarden later, but the DNS name stays the same)

- OctoPrint (3dprinter.lazypenguin.dev): 3D printer management with webcam streaming

- Node-RED (nodered.lazypenguin.dev): Home automation flows

- n8n (n8n.lazypenguin.dev): Workflow automation

- Pi-hole (pihole.lazypenguin.dev): privacy for my entire home, with tracker blocking

Tip: If you use Pi-hole as the DNS server for your tailnet, you can use it on every device, even when you’re not at home, even on your phone (you have to install Tailscale and connect to the tailnet, but this article assumes you will do that :) )

Why not Caddy’s built-in Tailscale certificates? #

Caddy has excellent integration with Tailscale and can automatically get HTTPS certificates for your Tailscale machine name. So why didn’t I use that?

Because Tailscale only gives you a certificate for your machine name, not for arbitrary subdomains.

You’d get https://homeserver.yourdomain.ts.net, and then you’d have to use subpaths like:

- https://homeserver.yourdomain.ts.net/photos

- https://homeserver.yourdomain.ts.net/bookmarks

- https://homeserver.yourdomain.ts.net/pihole

This works fine for many applications, but Immich is… particular. It doesn’t work nicely with subpath deployments. I searched the GitHub repo, and this feature still isn’t in development (and maybe that’s fine: there are other priorities than making a lazy sysadmin happy). Making subpaths work with X-Forwarded-* headers and path rewrites is a pain to handle for every application, and some of them take the same approach as Immich.

So I decided to switch to subdomains with my own domain and Let’s Encrypt.

1. Route53 setup: The wildcard approach #

I purchased a domain (let’s call it lazypenguin.dev) and configured a wildcard A record:

*.lazypenguin.dev → A → 100.x.y.z (my Tailscale IP)

Every subdomain, like photos.lazypenguin.dev, bookmarks.lazypenguin.dev, 3dprinter.lazypenguin.dev will point to my Raspberry Pi’s Tailscale IP.

When I add a new service, I only have to update the Caddyfile. No touching Route53. Lazy? Yes. Effective? Absolutely.
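To make the wildcard behaviour concrete, here is a minimal Python sketch of how a single wildcard record answers for any subdomain. It is a simplified model, not a real resolver: the `RECORDS` table and the address `100.101.102.103` are placeholders invented for illustration, and `resolve` and `is_tailscale_ip` are hypothetical helper names. The only external fact it relies on is that Tailscale hands out addresses from the 100.64.0.0/10 range.

```python
from __future__ import annotations

import ipaddress

# Tailscale assigns addresses from the CGNAT range 100.64.0.0/10.
TAILSCALE_RANGE = ipaddress.ip_network("100.64.0.0/10")

# Hypothetical zone contents: one wildcard A record, as in the article.
# The address is a placeholder, not a real Tailscale IP.
RECORDS = {"*.lazypenguin.dev": "100.101.102.103"}


def resolve(name: str, records: dict[str, str]) -> str | None:
    """Return the address for name: exact match first, then a wildcard."""
    if name in records:
        return records[name]
    # A wildcard stands in for the leftmost label(s):
    # photos.lazypenguin.dev -> *.lazypenguin.dev
    labels = name.split(".")
    for i in range(1, len(labels)):
        wildcard = "*." + ".".join(labels[i:])
        if wildcard in records:
            return records[wildcard]
    return None


def is_tailscale_ip(address: str) -> bool:
    """True if the address sits inside Tailscale's 100.64.0.0/10 range."""
    return ipaddress.ip_address(address) in TAILSCALE_RANGE
```

Any new name such as `wiki.lazypenguin.dev` resolves without a DNS change, while the bare `lazypenguin.dev` does not match the wildcard and would need its own record.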

Why a public domain instead of internal DNS? #

Because I don’t want to manage an internal DNS server running on a Raspberry Pi or, worse, manage hosts files or modify my devices’ configuration to use a particular resolver (even if Pi-hole would make this easier).

2. Let’s Encrypt with Route53 DNS-01 challenge #

To get Let’s Encrypt certificates for *.lazypenguin.dev, you need to prove you own the domain. Usually this means placing a file containing a token in a directory on your web server (.well-known/acme-challenge). Since I don’t have a web server exposed on the internet, I can’t use HTTP challenges, but luckily I can use DNS challenges (which Let’s Encrypt requires for wildcard certificates anyway).

Certbot can automatically create DNS TXT records in Route53 to prove domain ownership, then get wildcard certificates.
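For readers curious about what ends up in that TXT record, here is a small Python sketch of the DNS-01 rules from the ACME specification (RFC 8555). It only illustrates the naming and encoding; Certbot does all of this for you. The function names are made up for this example, and the `token` and `account_thumbprint` arguments stand in for values the ACME server and client exchange during a real run.

```python
import base64
import hashlib


def challenge_record_name(domain: str) -> str:
    """Name of the TXT record an ACME client creates for a DNS-01 challenge."""
    # A wildcard is validated at its base domain, so "*." is dropped first.
    base = domain[2:] if domain.startswith("*.") else domain
    return f"_acme-challenge.{base}"


def challenge_record_value(token: str, account_thumbprint: str) -> str:
    """TXT value: unpadded base64url of SHA-256 over 'token.thumbprint'."""
    key_authorization = f"{token}.{account_thumbprint}"
    digest = hashlib.sha256(key_authorization.encode("ascii")).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")
```

Note that `lazypenguin.dev` and `*.lazypenguin.dev` both map to the same record name, `_acme-challenge.lazypenguin.dev`, each with its own value.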

Installing Certbot Route53 plugin #

You only need to install this package:

sudo apt install certbot python3-certbot-dns-route53

IAM configuration #

You need to create an IAM user with permission to modify Route53 records. You can find the policy on Certbot’s documentation page: Certbot DNS Route53 documentation

Create the user, attach the policy, and save the access key credentials in the ~/.aws/credentials file (or run aws configure).

Initial certificate request #

sudo certbot certonly \
  --dns-route53 \
  --preferred-challenges dns-01 \
  --key-type ecdsa \
  -d lazypenguin.dev \
  -d "*.lazypenguin.dev"

If everything goes right, you will find your certificates in the directory /etc/letsencrypt/live/lazypenguin.dev/.

Renewal configuration #

Here’s my renewal config at /etc/letsencrypt/renewal/lazypenguin.dev.conf:

version = 2.1.0

archive_dir = /etc/letsencrypt/archive/lazypenguin.dev

cert = /etc/letsencrypt/live/lazypenguin.dev/cert.pem

privkey = /etc/letsencrypt/live/lazypenguin.dev/privkey.pem

chain = /etc/letsencrypt/live/lazypenguin.dev/chain.pem

fullchain = /etc/letsencrypt/live/lazypenguin.dev/fullchain.pem

# Options used in the renewal process

[renewalparams]

account = redacted

pref_challs = dns-01,

authenticator = dns-route53

server = https://acme-v02.api.letsencrypt.org/directory

key_type = ecdsa

renew_hook = systemctl restart caddy.service

The renew_hook is crucial: it restarts Caddy after renewal so it picks up the new certificates.

The AWS credentials quirk #

Everything went fine for two months, and then I found an issue: my certificate wasn’t renewed because Certbot’s systemd timer wasn’t finding my AWS credentials, even though manual renewals worked fine.

Even though my credentials file was in the right place and working, I had to modify the systemd service at /etc/systemd/system/certbot.service to explicitly include the credentials:

[Unit]

Description=Certbot

Documentation=file:///usr/share/doc/python-certbot-doc/html/index.html

Documentation=https://certbot.eff.org/docs

[Service]

Type=oneshot

ExecStart=/usr/bin/certbot -q renew --no-random-sleep-on-renew

PrivateTmp=true

Environment="AWS_ACCESS_KEY_ID=YOUR_ACCESS_KEY"

Environment="AWS_SECRET_ACCESS_KEY=YOUR_SECRET_KEY"

It works, even if I don’t like this kind of solution. I still haven’t figured out why the credential chain doesn’t work properly in the systemd context, but this keeps renewals running smoothly. By the way, I don’t like having static AWS credentials lying around. Maybe one day I will change this, but for now I’m fine with it.
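One possible explanation (not confirmed for this setup) is that the default credentials file is looked up relative to the home directory of the user running the process. Depending on how sudo is configured, a manual run may see a different HOME than the systemd service does. The Python sketch below is a simplified model of the first steps of the AWS SDK lookup order: environment variables, then the file named by AWS_SHARED_CREDENTIALS_FILE, then ~/.aws/credentials. The function name is hypothetical, and the fallback to /root when HOME is missing is an assumption based on system services running as root by default.

```python
from __future__ import annotations

from pathlib import PurePosixPath


def credential_source(env: dict[str, str]) -> str:
    """Describe where a simplified AWS credential lookup would look first."""
    # Step 1: static keys in the environment win over any file.
    if env.get("AWS_ACCESS_KEY_ID") and env.get("AWS_SECRET_ACCESS_KEY"):
        return "environment variables"
    # Step 2: an explicitly configured credentials file.
    explicit = env.get("AWS_SHARED_CREDENTIALS_FILE")
    if explicit:
        return f"file {explicit}"
    # Step 3: the default file under the home directory.
    # Assumption: with no HOME set, the process runs as root.
    home = env.get("HOME", "/root")
    return f"file {PurePosixPath(home) / '.aws' / 'credentials'}"
```

Calling `credential_source(dict(os.environ))` from an interactive shell and again from inside the service (for example via a temporary ExecStartPre line) would show whether the two contexts look in different places. If they do, pointing AWS_SHARED_CREDENTIALS_FILE at the file is an alternative to embedding the keys in the unit.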

3. Caddy configuration: One subdomain per service #

Now for the star of the show: Caddy. I like its simple configuration.

Here’s my Caddyfile:

bookmarks.lazypenguin.dev {
    tls /etc/letsencrypt/live/lazypenguin.dev/fullchain.pem /etc/letsencrypt/live/lazypenguin.dev/privkey.pem
    reverse_proxy http://localhost:3000
}

photos.lazypenguin.dev {
    tls /etc/letsencrypt/live/lazypenguin.dev/fullchain.pem /etc/letsencrypt/live/lazypenguin.dev/privkey.pem
    reverse_proxy http://localhost:2283
}

pihole.lazypenguin.dev {
    tls /etc/letsencrypt/live/lazypenguin.dev/fullchain.pem /etc/letsencrypt/live/lazypenguin.dev/privkey.pem
    reverse_proxy localhost:8084
}

3dprinter.lazypenguin.dev {
    tls /etc/letsencrypt/live/lazypenguin.dev/fullchain.pem /etc/letsencrypt/live/lazypenguin.dev/privkey.pem
    handle_path /webcam/* {
        reverse_proxy localhost:8083
    }
    reverse_proxy localhost:8082 {
        header_up X-Forwarded-Proto {scheme}
    }
}

n8n.lazypenguin.dev {
    tls /etc/letsencrypt/live/lazypenguin.dev/fullchain.pem /etc/letsencrypt/live/lazypenguin.dev/privkey.pem
    reverse_proxy http://localhost:5678
}

nodered.lazypenguin.dev {
    tls /etc/letsencrypt/live/lazypenguin.dev/fullchain.pem /etc/letsencrypt/live/lazypenguin.dev/privkey.pem
    reverse_proxy http://localhost:1880
}

Each service is completely independent and has its own subdomain. With the Tailscale integration the file would have been cleaner, but only if every application handled living in a subpath nicely.
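Since most site blocks follow the same pattern (shared wildcard certificate plus one reverse_proxy line), the repetition can also be expressed as data. The Python sketch below is purely illustrative and not part of the setup described here, where the Caddyfile is edited by hand. `SERVICES`, `site_block`, and `render_caddyfile` are hypothetical names, and only the simple services are included (the OctoPrint block with its webcam path needs more than one line).

```python
from __future__ import annotations

CERT_DIR = "/etc/letsencrypt/live/lazypenguin.dev"

# Subdomain -> local port, for the services that need a single proxy line.
SERVICES = {
    "bookmarks": 3000,
    "photos": 2283,
    "n8n": 5678,
    "nodered": 1880,
}


def site_block(subdomain: str, port: int, domain: str = "lazypenguin.dev") -> str:
    """Render one Caddy site block that reuses the shared wildcard certificate."""
    return (
        f"{subdomain}.{domain} {{\n"
        f"    tls {CERT_DIR}/fullchain.pem {CERT_DIR}/privkey.pem\n"
        f"    reverse_proxy localhost:{port}\n"
        f"}}\n"
    )


def render_caddyfile(services: dict[str, int]) -> str:
    """Join one site block per service, separated by blank lines."""
    return "\n".join(site_block(name, port) for name, port in services.items())
```

Adding a service then means adding one entry to the mapping, which mirrors the "just update the Caddyfile" workflow.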

Comparison with the old subpath approach #

For context, here’s what my old Caddyfile looked like when I was trying to make subpaths work:

homeserver.yourdomain.ts.net {
    root * /usr/share/caddy
    file_server

    handle_path /pihole/* {
        reverse_proxy localhost:8084
    }

    handle_path /3dprinter/* {
        handle_path /webcam/* {
            reverse_proxy localhost:8083
        }
        reverse_proxy localhost:8082 {
            header_up X-Forwarded-Proto {scheme}
            header_up X-Script-Name /3dprinter
        }
    }

    handle_path /* {
        reverse_proxy http://localhost:2283
    }
}

All the handle_path directives were getting difficult to manage, because I also had to take rule precedence into account. Everything is simpler now.

4. Docker configuration: Exposing the right ports #

Many self-hosted applications ship a ready-to-use Docker Compose configuration that exposes a non-privileged port. Here’s an example:

services:
  immich-server:
    container_name: immich_server
    image: ghcr.io/immich-app/immich-server:${IMMICH_VERSION:-release}
    dns:
      - 1.1.1.1
      - 8.8.8.8
    volumes:
      - ${UPLOAD_LOCATION}:/usr/src/app/upload
      - /etc/localtime:/etc/localtime:ro
    env_file:
      - .env
    ports:
      # The 127.0.0.1 prefix binds the port to loopback, so it is exposed to localhost only
      - "127.0.0.1:2283:2283"

With Caddy, I can proxy the traffic to localhost:2283 and handle HTTPS encryption, and I don’t have to remember which application is bound to which port.
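Whether a published port is reachable only from the host depends on the form of the mapping: a short HOST:CONTAINER entry is published on all host interfaces, while IP:HOST:CONTAINER binds it to a single address. The Python sketch below makes that distinction explicit. `published_address` is a hypothetical helper, and it deliberately handles only the two IPv4 forms mentioned here, not the full Compose port syntax.

```python
from __future__ import annotations


def published_address(mapping: str) -> tuple[str, int, int]:
    """Split a port mapping into (host_ip, host_port, container_port).

    Handles only the forms "HOST:CONTAINER" and "IP:HOST:CONTAINER".
    """
    parts = mapping.split(":")
    if len(parts) == 2:
        # No IP given: Docker publishes the port on all host interfaces.
        host_ip = "0.0.0.0"
        host_port, container_port = parts
    elif len(parts) == 3:
        host_ip, host_port, container_port = parts
    else:
        raise ValueError(f"unsupported mapping: {mapping!r}")
    return host_ip, int(host_port), int(container_port)
```

For a reverse proxy running on the same host, the 127.0.0.1 form is enough, because Caddy reaches the container through localhost.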

Two years running, here’s what I’ve learned #

- Certbot renewal failures: The AWS credentials issue was frustrating. If renewals start failing, check the systemd service logs with journalctl -u certbot.service.

- Wildcard certificates are powerful: One cert covers all current and future subdomains.

- Subdomain routing solves a lot of problems: After fighting with subpaths, everything is simple now.

- Tailscale IPs are stable: My Raspberry Pi has kept the same Tailscale IP for two years without issues.

- A single Raspberry Pi 4 is enough for everything, but I’m booting and running everything from an external SSD.

- You need a backup strategy: I use Restic with resticprofile; more on that in another article.

Where to go next #

This setup has been rock-solid for me. If you want to experiment with it, tell me how it goes, and whether I forgot something!

Have you built a similar self-hosting setup? Are you using a different reverse proxy? Let me know in the comments or reach out on LinkedIn.